Infrastructure as Code

Bruno Devic // Monobit Solutions Ltd bdevic@monobit.co

What is it?

ONE OF THE ASPECTS OF ...

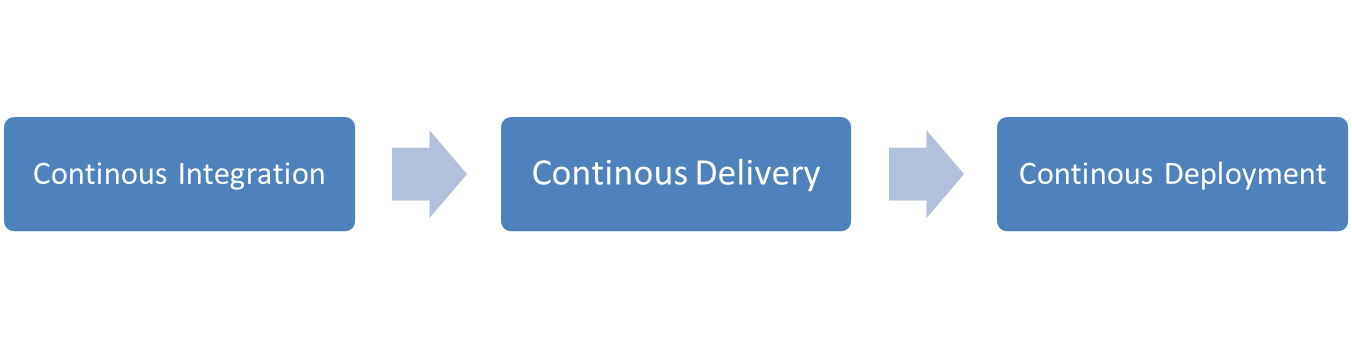

Continuous DELIVERY

A set of practices and principles aimed at building, testing and releasing software faster and more frequently.

How long would it take your organization to deploy a change that involves just one single line of code? Do you do this on a repeatable, reliable basis?

TARGET

better project tracking

release frequently

done means done

reduce risk of release

increase quality

early integration

deploy less

reduce cycle time

better planning

build the minimum viable product

reduce WIP

BOTTOM LINE

releases must be boring

the system must always be releasable

every build is a potential release candidate

SOME PERSPECTIVE

WHERE IS CONTINUOUS DELIVERY

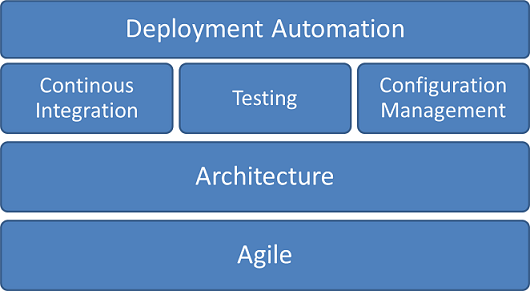

WHERE IS INFRASTRUCTURE AS CODE

NOT THERE YET...

CONFIGURATION MANAGEMENT

version everything

database

application configuration

infrastructure

dependencies

externalize configuration

have reproducible infrastructure

devops
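One way to read "externalize configuration" is sketched below: defaults are versioned with the code, and each environment overrides them from outside the build. The setting names `DB_HOST` and `DB_PORT` and the helper `load_settings` are hypothetical, chosen only for illustration.

```ruby
# Defaults are versioned together with the application code.
# DB_HOST and DB_PORT are hypothetical setting names.
DEFAULTS = {
  'DB_HOST' => 'localhost',
  'DB_PORT' => '5432'
}.freeze

# Each environment overrides the defaults from outside the build artifact,
# here through environment variables (any Hash-like source works).
def load_settings(env = ENV)
  DEFAULTS.each_with_object({}) do |(key, default), settings|
    settings[key] = env.fetch(key, default)
  end
end

puts load_settings.inspect
```

The same build can then be promoted unchanged from one environment to the next, with only the externally supplied values differing.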

Infrastructure as CODE

Building and maintaining infrastructure resembles the way software developers build and maintain application source code

Infrastructure code needs to be version controlled, tested, maintained, reviewed, deployed and crafted like regular source code

Why is it useful

single point of truth

versioning environments

standard format to describe infrastructure

fast deployment of infrastructure changes

scalable infrastructure automation

stop configuration drift

TOOLSET

VAGRANT 101

open-source (MIT) tool for building and managing virtualized development environments

syntactic sugar around VMs and provisioning

written in ruby

runs on windows, linux, mac os x

extensible via plugins

easy to use and configure

CONCEPTS

box: pre-packaged base virtual machine

provider: virtualization technology running the VM

- virtualbox (default), vmware, aws, docker, lxc, kvm, cloudstack, etc.

provisioner: alters the virtual machine to a desired state

- file, shell, ansible, puppet, cfengine, chef, salt, etc.

BASIC USAGE

vagrant box add base http://files.vagrantup.com/lucid32.box

// download predefined vm and register as 'base'

vagrant init

// create default Vagrantfile

vagrant up

// unpack box, create vm from box, start vm and, on first run, provision it

vagrant status

// check status of vms defined in Vagrantfile

vagrant ssh

// ssh to running vm

vagrant provision

// run provisioner(s) specified in Vagrantfile

vagrant halt

// stop a running vm

vagrant destroy

// stop and delete vm

vagrant reload

// restart vm using new Vagrantfile

vagrant suspend

// suspend vm

vagrant resume

// resume vm
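To tie the commands above back to the concepts, here is a minimal Vagrantfile sketch. It assumes the box registered as 'base' earlier; the memory size and the inline shell command are illustrative assumptions, not part of the original setup.

```ruby
# Minimal Vagrantfile sketch; the concrete values are illustrative assumptions.
Vagrant.configure("2") do |config|
  # Box: the base image registered earlier with `vagrant box add base ...`
  config.vm.box = "base"

  # Provider: VirtualBox is the default; the memory size is hypothetical
  config.vm.provider :virtualbox do |vb|
    vb.memory = 1024 # MB
  end

  # Provisioner: brings the fresh VM to the desired state
  # (installing nginx is only an example)
  config.vm.provision :shell, inline: "apt-get update && apt-get install -y nginx"
end
```

With this file in the project directory, `vagrant up` creates and provisions the VM, and `vagrant provision` re-runs only the shell step.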

BENEFITS

reduced setup time

isolation

each project has its own isolated env

CONSISTENCY

exact same vm available to all

REPRODUCIBILITY

up, destroy, up, destroy

DISTRIBUTABLE

easy to transfer to all targets

MORE ADVANCED EXAMPLE

# Vagrantfile to create images that run WebSphere Application Server

Vagrant.configure("2") do |config|
  config.ssh.forward_x11 = true

  # one private IP per WebSphere flavour
  ips = {
    :was6nd  => "10.10.10.4",
    :was6sa  => "10.10.10.6",
    :was7nd  => "10.10.10.2",
    :was7sa  => "10.10.10.7",
    :was8nd  => "10.10.10.3",
    :was8sa  => "10.10.10.5",
    :was85nd => "10.10.10.8",
    :was85sa => "10.10.10.9"
  }

  # define one VM per entry in the ips table
  ips.each_key do |version|
    config.vm.define version do |was_config|
      was_config.vm.network :private_network, ip: ips[version]

      was_config.vm.provider :virtualbox do |vb|
        vb.customize ["modifyvm", :id, "--memory", "4096"]
        vb.customize ["modifyvm", :id, "--name", "#{ENV['VM_PREFIX']}#{version}"]
      end

      was_config.vm.box = "basebox"
      was_config.vm.box_url = "http://monobit.co/vagrant/basebox.box"

      was_config.vm.synced_folder ENV["CATALOG"], "/catalog"
      was_config.vm.synced_folder "../../scripts/files", "/librarian-files"

      # shell provisioner runs first, then puppet applies the per-version manifest
      was_config.vm.provision :shell, :path => "../../scripts/librarian.sh"
      was_config.vm.provision :puppet do |puppet|
        puppet.manifests_path = "manifests"
        puppet.manifest_file = "#{version}-install.pp"
      end
    end
  end
end

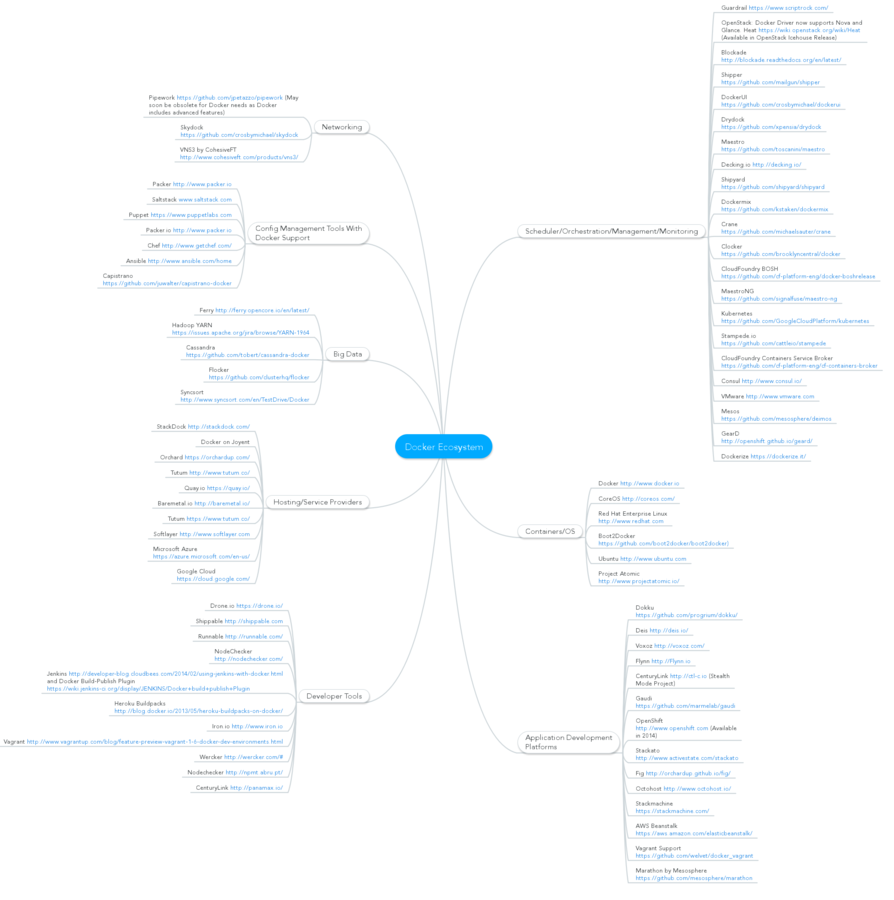

ECOSYSTEM

BOX REPOS

CONCEPTS

Declarative language

file, service, user, package

tell what state you want, not how

Idempotence

B(file1, file2, service1) -- no change --> B(file1, file2, service1)

CONVERGENCE

A (file1) -- state converges --> B(file1, file2, service1)
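The two properties above can be illustrated with a toy model in plain Ruby. This is only a sketch: system state is reduced to a set of resource names, and `converge` is a hypothetical helper, not how any real tool is implemented.

```ruby
require 'set'

# Desired state B from the slide: two files and one service.
DESIRED = Set['file1', 'file2', 'service1'].freeze

# Returns [new_state, changes]. Only resources missing from the current
# state are "applied"; anything already present is left untouched.
def converge(current, desired = DESIRED)
  changes = desired - current
  [current | changes, changes]
end

state_a = Set['file1']
state_b, first_run = converge(state_a)  # convergence: A -> B
_, second_run = converge(state_b)       # idempotence: B -> B, nothing to do

puts "first run applied:  #{first_run.to_a.sort.join(', ')}"
puts "second run applied: #{second_run.to_a.sort.join(', ')}"
```

Running the same description repeatedly is therefore safe: the first run closes the gap, later runs change nothing.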

PUPPET 101

configuration management system that allows you to define the state of your IT infrastructure, then automatically enforces the correct state

Apache 2.0 open source, first created in 2005

declarative DSL; Puppet itself is written in Ruby

client-server and standalone deployment model

CONCEPTS

resource: describes an aspect of a system (file, service, package etc.); resource types and attributes are predefined

execution order must be explicitly defined

manifest: puppet program with a .pp extension; contains one or more classes

class: group of resources, e.g. DB server, load-balancer; classes are organized into modules and are singletons

type { 'title':
  attribute => value,
}

CONCEPTS CONT.

defined type: custom resource-like type, very similar to a class but can be declared multiple times

module: organize, distribute and reuse puppet code

my_module

- manifests # puppet code stored here (classes, types etc.)

- files # static config files

- templates # ruby erb config files

- lib # custom functions, facts, providers

- tests # simple manifests for manual testing

- spec # automated tests
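Files under templates are ordinary Ruby ERB, so the rendering step can be tried outside Puppet. The sketch below uses Ruby's standard `erb` library; the template body, the `VhostScope` class and the variable names are hypothetical, with instance variables standing in for the variables Puppet would expose to the template.

```ruby
require 'erb'

# Hypothetical template body, as it might live in templates/vhost.erb.
VHOST_TEMPLATE = <<~ERB_SOURCE
  server {
    listen <%= @port %>;
    server_name <%= @server_name %>;
    root <%= @docroot %>;
  }
ERB_SOURCE

# Stand-in for the scope Puppet provides: variables appear as @ivars.
class VhostScope
  def initialize(server_name:, port:, docroot:)
    @server_name = server_name
    @port = port
    @docroot = docroot
  end

  # Render the template against this object's instance variables.
  def render(template)
    ERB.new(template).result(binding)
  end
end

def render_vhost(server_name:, port: 80, docroot: '/var/www')
  VhostScope.new(server_name: server_name, port: port, docroot: docroot)
            .render(VHOST_TEMPLATE)
end

puts render_vhost(server_name: 'localhost')
```

In a real module the rendered text would typically become the content of a file resource rather than being printed.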

class nginx {

  # Install the nginx package. This relies on apt-get update
  package { 'nginx':
    ensure  => 'present',
    require => Exec['apt-get update'],
  }

  # Make sure that the nginx service is running
  service { 'nginx':
    ensure  => running,
    require => Package['nginx'],
  }

  # Add a vhost template
  file { 'vagrant-nginx':
    path    => '/etc/nginx/sites-available/localhost',
    ensure  => file,
    source  => 'puppet:///modules/nginx/localhost',
    require => Package['nginx'],
    notify  => Service['nginx'],
  }

  # Disable the default nginx vhost
  file { 'default-nginx-disable':
    path    => '/etc/nginx/sites-enabled/default',
    ensure  => absent,
    require => Package['nginx'],
  }

  # Symlink our vhost in sites-enabled to enable it
  file { 'vagrant-nginx-enable':
    path    => '/etc/nginx/sites-enabled/localhost',
    target  => '/etc/nginx/sites-available/localhost',
    ensure  => link,
    notify  => Service['nginx'],
    require => [
      File['vagrant-nginx'],
      File['default-nginx-disable']
    ],
  }
}

EXAMPLE

SOME RESOURCE TYPES

service { 'apmd':
  ensure => 'running',
  enable => 'true',
}

package { 'apmd':
  ensure => installed
}

user { 'foo':
  ensure     => present,
  uid        => '507',
  gid        => 'admin',
  shell      => '/bin/zsh',
  home       => '/home/foo',
  managehome => true,
}

file { "/etc/init.d/tomcat":
  source => "puppet:///modules/tomcat/tomcat",
  group  => 'root',
  owner  => 'root',
  mode   => 'ugo+x,go-w,go+r',
}

group { 'foo':
  ensure => present
}

exec { "extract-tomcat-server":
  command => "tar -xvzf 'apache-tomcat-8.0.8.tar.gz' -C /opt",
  # exec needs a fully qualified command or a search path
  path    => ['/bin', '/usr/bin'],
  # skip the command when the target directory already exists
  creates => "/opt/${tomcat_dir}",
}

IMPORTANT METAPARAMETERS

type { 'some_type':
  require   => ...,
  before    => ...,
  notify    => ...,
  subscribe => ...,
  alias     => ...,
  schedule  => ...
}

also possible to model via chaining operators -> and ~>

group { 'tomcat_group':
  name   => 'tomcat',
  ensure => 'present'
} ->
user { 'tomcat_user':
  name   => 'tomcat',
  ensure => 'present',
  gid    => 'tomcat'
}
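Relationships such as require, before and the -> arrow describe a dependency graph, and an apply order can be derived from it by topological sorting. The sketch below models that idea with Ruby's standard `tsort` library; the `Catalog` class and the `File['/opt/tomcat']` resource are hypothetical, and this is not Puppet's actual implementation.

```ruby
require 'tsort'

# Toy resource catalog: each key is a resource reference, each value lists
# the resources it requires (what `require =>` or `->` would declare).
class Catalog
  include TSort

  def initialize(requirements)
    @requirements = requirements
  end

  def tsort_each_node(&block)
    @requirements.each_key(&block)
  end

  def tsort_each_child(resource, &block)
    @requirements.fetch(resource, []).each(&block)
  end

  # Dependencies come first, dependents last.
  def apply_order
    tsort
  end
end

catalog = Catalog.new({
  "User['tomcat_user']"   => ["Group['tomcat_group']"],
  "Group['tomcat_group']" => [],
  "File['/opt/tomcat']"   => ["User['tomcat_user']"] # hypothetical resource
})

puts catalog.apply_order
```

A cycle in the declared relationships makes `tsort` raise `TSort::Cyclic`, comparable to a dependency cycle being rejected instead of applied in an arbitrary order.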

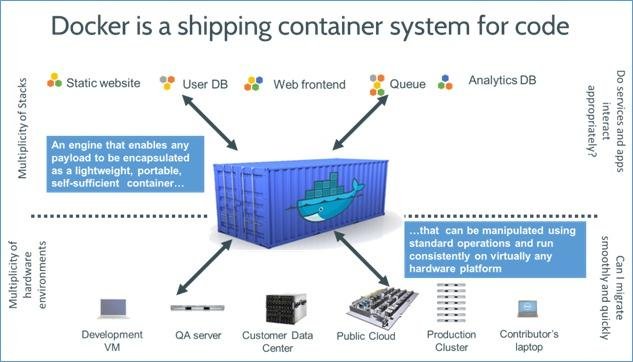

DOCKER 101

Docker is an open-source engine that automates the deployment of any application as a lightweight, portable, self-sufficient container that will run virtually anywhere.

Docker brings LXC to the masses

Docker first started in 2013 and is very HOT at the moment

500+ committers

support from major tech companies (Google, Red Hat, Microsoft, IBM, Rackspace etc.)

fast growing ecosystem

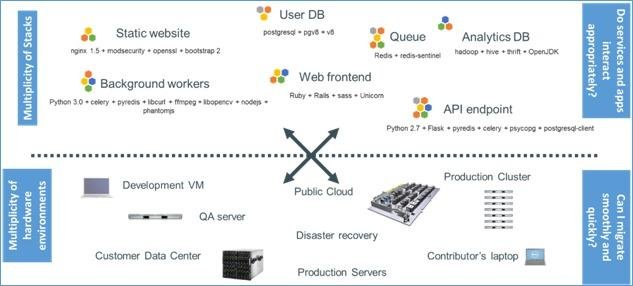

WHY CONTAINERS ?

DEPENDENCY HELL MATRIX

UNIVERSAL CONTAINER

CONTAINERS vs. VMs

VM without the overhead of a VM

Processes are isolated, but run straight on the host

BENEFITS

more efficient, consistent, and repeatable

no configuration drift between envs

you package it, I'll ship it

improved speed, reliability, scalability and portability

IMAGE STACKING, easy and fast to deploy changes

QUICK START

$ docker search ubuntu # search docker.io hub for all ubuntu images

// lots of results

$ docker pull ubuntu:latest # download image locally

ubuntu:latest: The image you are pulling has been verified

d497ad3926c8: Pull complete

ccb62158e970: Pull complete

e791be0477f2: Pull complete

3680052c0f5c: Pull complete

22093c35d77b: Pull complete

5506de2b643b: Pull complete

511136ea3c5a: Already exists

Status: Downloaded newer image for ubuntu:latest

$ docker images # list local images

REPOSITORY TAG IMAGE ID CREATED VIRTUAL SIZE

ubuntu latest 5506de2b643b 5 days ago 199.3 MB

$ docker run ubuntu echo "Hello world" # run container, execute command and stop container

Hello world

$ docker run -i -t ubuntu /bin/bash # run container in interactive mode

root@b38ea8c7b345:/#

DOCKER CLI

$ docker search # search docker.io hub

$ docker pull # download image

$ docker push # upload image

$ docker images # list local images

$ docker build # builds image from Dockerfile

$ docker rmi # remove image

$ docker ps # list containers

$ docker run # creates and starts a container from an image

-d # run detached (in the background)

-i -t # run in interactive mode

-p <host>:<container> # publish a container port on the host

$ docker start / stop / kill # control existing container

$ docker attach # connect to container

$ docker rm # remove container

MANUAL PROVISIONING IS JUST BAD

Dockerfile

instructions for building an image automatically

FROM # defines base image

MAINTAINER # author metadata

ADD # add local file to image

RUN # execute command

ENV # set env variable

EXPOSE # expose a port

CMD # default command to execute on run

VOLUME # create a mount point

SYNTAX

$ docker build -t <tag> .

MORE ADVANCED SCENARIOS

linking containers together

$ docker run -d --name db bdevic/postgres

$ docker run -d -P --name web --link db:db bdevic/webapp python app.py

# exposes ports from db to webapp, creates env variables and updates /etc/hosts in the web container
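Inside the linked container, the injected environment variables are how the application can find its database. The sketch below parses the `<ALIAS>_PORT` variable that links create; it assumes the db image exposes a TCP port (5432 is used here for postgres), and `linked_endpoint` is a hypothetical helper. The webapp in the example above is Python; Ruby is used here only for illustration.

```ruby
require 'uri'

# Parse the <ALIAS>_PORT variable that a link injects,
# e.g. DB_PORT=tcp://172.17.0.5:5432 for `--link db:db`.
# Returns nil when the container was started without that link.
def linked_endpoint(link_alias, env = ENV)
  raw = env["#{link_alias.upcase}_PORT"]
  return nil if raw.nil? || raw.empty?

  uri = URI.parse(raw)
  { host: uri.host, port: uri.port }
end

# The address below is an example value, not something Docker guarantees.
example_env = { 'DB_PORT' => 'tcp://172.17.0.5:5432' }
puts linked_endpoint('db', example_env).inspect
```

Because the alias is also written to the hosts file, an application can alternatively connect to the host name `db` directly.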

Storing data with mapped data volumes

$ docker run -d -P --name web -v /src/webapp:/opt/webapp bdevic/webapp python app.py

# map /src/webapp from host to /opt/webapp, bypasses the union filesystem

Storing data with DATA CONTAINERS

$ docker run -d -v /dbdata --name dbdata bdevic/postgres # data-only container

$ docker run -d --volumes-from dbdata --name real-db bdevic/postgres

# mounts the /dbdata volume from the dbdata container